Amazon Web Services: Background

Amazon Web Services (AWS) have revolutionised the way we view IT provisioning. AWS makes so many things easier, and often cheaper too. The benefits scale from the SME right up to corporates; no segment is left out. Complexity is abstracted away, and with a little effort, large and/or complex systems can be built with a few clicks and some configuration.

Architecture

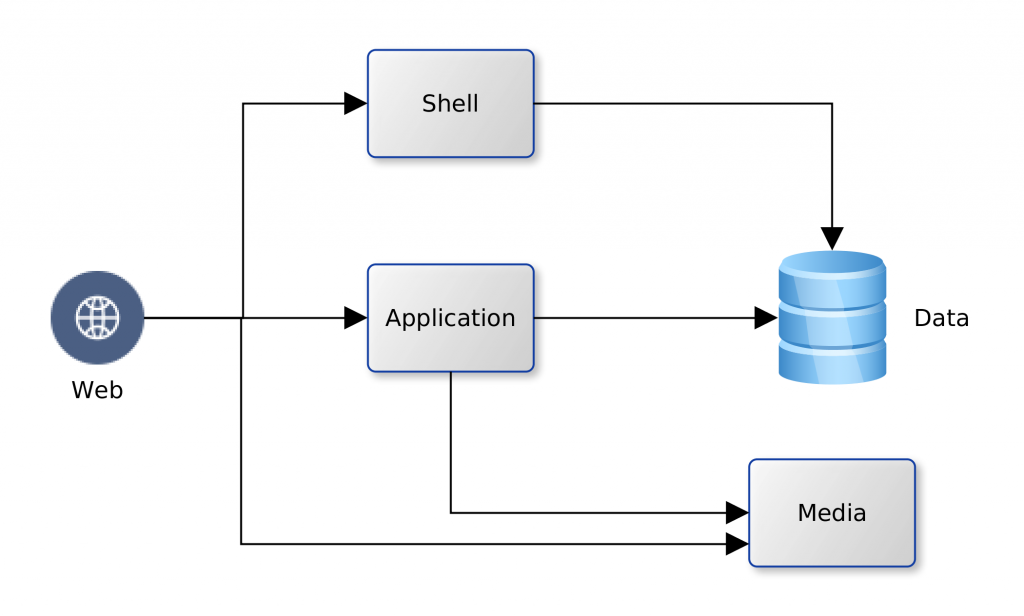

We decided to take a quick look at just how much AWS could offer low-budget SMEs, using our company’s existing platform as the subject. We have one Oracle database and a handful of MySQL databases, an application server, and a web server that fronts both the application server and several CMS-driven sites. The application server runs Java web services that use data from the Oracle database. The web server hosts the pages for the Java application. It also serves a number of WordPress/PHP sites that run on data from the MySQL databases. The logical view is illustrated in the diagram below:

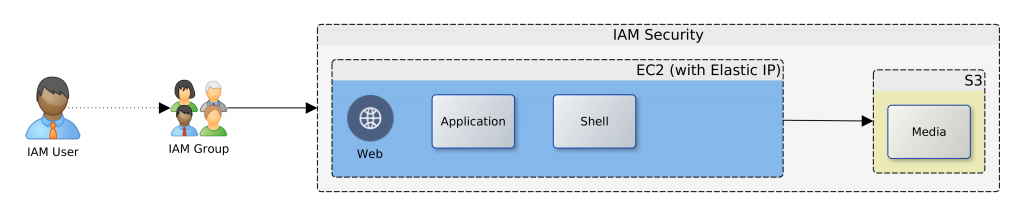

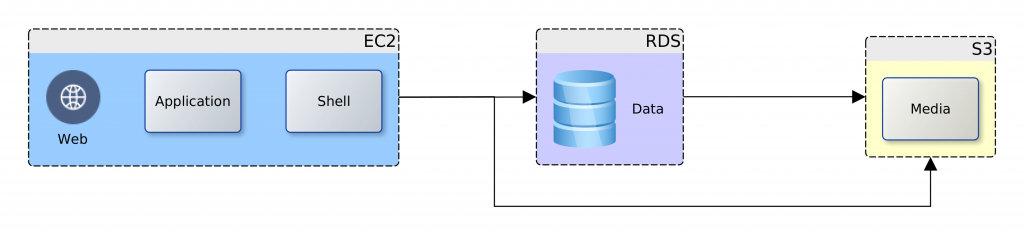

We could map the logical view one-to-one onto AWS service units, or rationalise the target resources. AWS provides services for computation for web and application servers (EC2), configuration management (OpsWorks), data (RDS) and static web content and media (S3), along with other useful features such as Elastic IP, Lambda and IAM. So we have the option to map each logical component to its own AWS service. This would give us the most flexible deployment and the strongest guarantees on non-functional requirements (NFRs) such as security, availability and recoverability. However, it would cost more, add complexity, and could introduce performance issues, for example from network latency between separate services.

Solutions

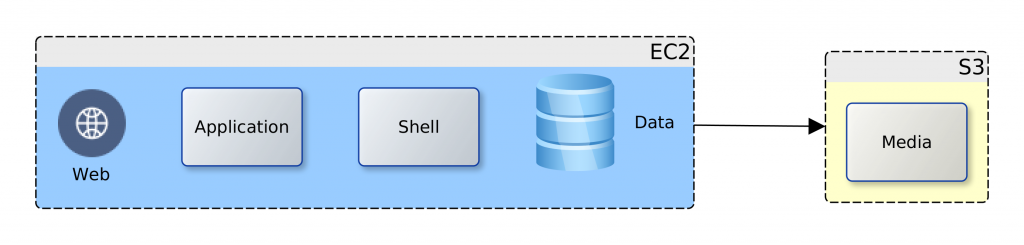

Going back to our business case and project drivers, cost reduction stood out. After some consideration two deployment options were produced (shown above), and because of the emphasis on cost we chose the consolidated option. The web, application and data components were all placed on the EC2 instance, as they all require compute. All the media files were placed in the S3 bucket. The database data files could also have been located in the S3 bucket, but were not because of latency and the costs that would accumulate from repeated access.

The media files went to the S3 bucket because of their number and total size (several GB). Keeping them separate ensures that the choice of EC2 instance is not unduly influenced by storage requirements. The consolidated view allows us to taste-and-see, starting small and simple. Over time we will monitor, review and, if need be, scale up or scale out to address any observed weaknesses.
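The trade-off between the two deployment options can be made concrete as a mapping from logical components to AWS service units. The sketch below is illustrative only: the component names and the specific services assigned in the one-to-one option are assumptions based on the logical view, not a record of the actual design.

```python
from collections import Counter
from typing import Dict

# Logical components from the logical view (names are illustrative).
COMPONENTS = ["web", "application", "oracle_db", "mysql_dbs", "media"]

# Option 1 (assumed services): one AWS service unit per logical component.
ONE_TO_ONE: Dict[str, str] = {
    "web": "EC2 (web server)",
    "application": "EC2 (application server)",
    "oracle_db": "RDS (Oracle)",
    "mysql_dbs": "RDS (MySQL)",
    "media": "S3",
}

# Option 2 (chosen): compute-bound components share one EC2 instance,
# media goes to S3.
CONSOLIDATED: Dict[str, str] = {
    "web": "EC2",
    "application": "EC2",
    "oracle_db": "EC2",
    "mysql_dbs": "EC2",
    "media": "S3",
}


def service_units(mapping: Dict[str, str]) -> Counter:
    """Count how many logical components land on each AWS service unit."""
    return Counter(mapping[c] for c in COMPONENTS)


if __name__ == "__main__":
    for name, mapping in [("one-to-one", ONE_TO_ONE), ("consolidated", CONSOLIDATED)]:
        units = service_units(mapping)
        print(f"{name}: {len(units)} service units {dict(units)}")
```

Fewer service units means fewer things to pay for and manage; the price is that the consolidated components share one instance's capacity and failure domain.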

Migration

Having decided on the target option, the next thing was to plan the migration from the existing production system. An outline of the plan follows:

- Copy resources (web, application, data, media) from production to a local machine that serves as the AWS staging machine

- Create the target AWS components – EC2 and S3, as well as an Elastic IP and the appropriate IAM users and security policies

- Transfer the media files to the S3 bucket

- Initialise the EC2 instance and update it with the necessary software

- Transfer the web, application and data resources to the EC2 instance

- Switch DNS records to point at the new platform

- Evaluate the service in comparison to the old platform
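A plan like this can be scripted so that each step is first checked in a dry run and, if a later step fails, the completed steps are undone. The Python sketch below is a hypothetical illustration of that dry-run and rollback idea, not the scripts actually used (those were shell scripts); `Step` and `run_plan` are invented names and the step actions are placeholders.

```python
from dataclasses import dataclass
from typing import Callable, List


def _noop() -> None:
    """Default undo action for steps with nothing to reverse."""


@dataclass
class Step:
    name: str
    do: Callable[[], None]
    undo: Callable[[], None] = _noop


def run_plan(steps: List[Step], dry_run: bool = True) -> List[str]:
    """Run steps in order; if one fails, undo completed steps in reverse.

    In dry-run mode nothing is executed; the log just lists the steps.
    """
    log: List[str] = []
    completed: List[Step] = []
    for step in steps:
        if dry_run:
            log.append(f"would run: {step.name}")
            continue
        try:
            step.do()
        except Exception as exc:
            log.append(f"failed: {step.name} ({exc})")
            # Roll back towards the status quo ante, most recent step first.
            for prev in reversed(completed):
                prev.undo()
                log.append(f"undone: {prev.name}")
            return log
        completed.append(step)
        log.append(f"ran: {step.name}")
    return log


if __name__ == "__main__":
    def switch_dns() -> None:
        raise RuntimeError("simulated registrar error")

    plan = [
        Step("create EC2, S3, Elastic IP, IAM", do=lambda: None),
        Step("transfer media to S3", do=lambda: None),
        Step("switch DNS records", do=switch_dns),
    ]
    print("\n".join(run_plan(plan, dry_run=True)))
    print("\n".join(run_plan(plan, dry_run=False)))
```

Running the dry run first gives a reviewable list of what will happen; the real run then either completes or leaves a log of what was rolled back.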

Implementation

Then it was time to put the migration plan into action. A scripted approach was chosen, as it allowed us to verify and validate each step in detail before actual execution. Automation also provided a fast route back to the status quo ante, should things go wrong. Once again we had the luxury of a number of options:

- Linux tools

- Ansible

- AWS-side scripting (Chef, bash, etc.)

Given our in-house knowledge and the versatility of the staging machine's operating system (OS), Linux tools were chosen. The detailed migration plan was to be automated using a combination of the AWS command line interface (CLI) for Linux, shell scripts, and the built-in ssh and scp tools. Further elaboration of the migration plan into an executable schedule produced the following outline:

- Update S3 Bucket

  - Copy all web resources (/var/www) from the staging machine to the S3 bucket

- Configure EC2 Instance

  - Update the package index (apt update) and install the necessary services: Apache, Tomcat, PHP, MySQL

  - Add the JK module (mod_jk) to Apache, having replicated the required JK configuration files from the staging machine

  - Enable SSL for Apache, having replicated the required SSL certificate files

  - Fix an incorrect default value in Apache’s ssl.conf

  - Configure group ownership of the web server files (group www)

  - Configure file permissions in /var/www

  - Replicate the MySQL dump file from the staging machine

  - Recreate the MySQL databases, users, tables, etc.

  - Restart services: MySQL, Tomcat, Apache

  - Test PHP, then remove the test script …/phpinfo.php

  - Install the S3 mount tool

  - Configure the S3 mount point

  - Change owner and permissions on the S3-mounted directories and files, for Apache access

  - Replicate the application WAR file from the staging machine

  - Restart services: MySQL, Tomcat, Apache

- Finalise Cutover

  - Update DNS records at the DNS registrar and flush caches

  - Visit web and application server pages

Anonymised scripts here: base, extra
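The schedule could be driven from the staging machine by generating each command and reviewing it before execution. The sketch below only builds and prints the commands (a dry run) and covers a subset of the steps; it is not the anonymised scripts referred to above. It assumes an Ubuntu EC2 image (20.04/22.04 package and service names), s3fs-fuse as the S3 mount tool with credentials already in `/etc/passwd-s3fs`, and hypothetical host, key, bucket, group and file names.

```python
import shlex
from typing import List


def ec2_schedule(host: str, key: str, bucket: str, mount_point: str,
                 web_group: str) -> List[List[str]]:
    """Return argv lists for a subset of the S3 and EC2 steps, in order."""

    def ssh(cmd: str) -> List[str]:
        # Run one command on the EC2 instance over ssh.
        return ["ssh", "-i", key, host, cmd]

    def scp(src: str, dest: str) -> List[str]:
        # Replicate a file from the staging machine to the instance.
        return ["scp", "-i", key, src, f"{host}:{dest}"]

    return [
        # Update S3 bucket: copy web resources from the staging machine.
        ["aws", "s3", "sync", "/var/www", f"s3://{bucket}/www"],
        # Configure EC2 instance (Ubuntu package and service names assumed).
        ssh("sudo apt-get update"),
        ssh("sudo apt-get install -y apache2 libapache2-mod-jk tomcat9"
            " php libapache2-mod-php mysql-server s3fs"),
        ssh("sudo a2enmod ssl"),
        ssh(f"sudo groupadd -f {web_group}"),
        ssh(f"sudo chgrp -R {web_group} /var/www"),
        scp("dump.sql", "/tmp/dump.sql"),
        ssh("sudo mysql < /tmp/dump.sql"),
        # Mount the bucket; assumes credentials are already in /etc/passwd-s3fs.
        ssh(f"sudo mkdir -p {mount_point}"),
        ssh(f"sudo s3fs {bucket} {mount_point}"
            " -o passwd_file=/etc/passwd-s3fs -o allow_other"),
        scp("app.war", "/tmp/app.war"),
        ssh("sudo cp /tmp/app.war /var/lib/tomcat9/webapps/"),
        ssh("sudo systemctl restart mysql tomcat9 apache2"),
    ]


if __name__ == "__main__":
    # Dry run: print each command for review instead of executing it.
    for argv in ec2_schedule("ubuntu@203.0.113.10", "staging-key.pem",
                             "example-media-bucket", "/mnt/s3-media", "www"):
        print(shlex.join(argv))
```

Once the printed commands look right, each argv list could be passed to `subprocess.run(argv, check=True)`, so that the first failing step stops the run.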

A few observations about the use of S3 are worth noting. AWS needs to make money on storage, so it should not be surprising that updates to permissions and ownership count towards usage, in addition to the expected reads, writes, updates and deletes. Access to the S3 mount point from the EC2 instance can be quite slow, but there is a workaround: aggressive caching in the web and application servers. Caching also reduces the ongoing cost of repeated reads from S3, since the cached files are held on the EC2 instance. Depending on the time of day, uploads to S3 can be fast or very slow.
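The caching workaround can be illustrated with a read-through cache that has a time-to-live (TTL). In practice this would be configured in the servers themselves (for example Apache's mod_cache, or the application's own caching), so the class below is only a sketch of the principle. The `fetch` function is a hypothetical stand-in for a read from the S3 mount point, and the miss counter shows how many reads actually reach S3, each of which is slow and billed as a request.

```python
import time
from typing import Callable, Dict, Tuple


class TTLCache:
    """Read-through cache: serve local copies until they are ttl_seconds old."""

    def __init__(self, fetch: Callable[[str], bytes], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, bytes]] = {}
        self.misses = 0  # number of reads that went through to S3

    def get(self, key: str) -> bytes:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]          # fresh: served from the EC2 instance
        self.misses += 1
        data = self._fetch(key)      # stale or absent: slow read from S3
        self._entries[key] = (now, data)
        return data


if __name__ == "__main__":
    fake_now = [0.0]
    cache = TTLCache(lambda k: f"<contents of {k}>".encode(),
                     ttl_seconds=3600, clock=lambda: fake_now[0])
    for _ in range(100):
        cache.get("media/logo.png")
    print(cache.misses)  # 1: one S3 read serves 100 requests
    fake_now[0] = 4000.0
    cache.get("media/logo.png")
    print(cache.misses)  # 2: the entry expired and was refetched
```

A longer TTL means fewer S3 reads but a longer delay before updated media becomes visible, which suits media files that rarely change.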

Change Management

The cut-over to the new AWS platform was smooth. The web and application server resources were immediately accessible, with very good performance for the application server resources; performance for the web sites with resources on S3 was average. Planning and preparation took about two days. The migration scripts took less than 30 minutes to run. This excludes the time taken to upload files to the S3 bucket and update their ownership and permissions, and the time taken to manually update the DNS records and flush local caches.

Overall, this has been a very successful project, and it gives us great confidence to adopt more solutions from the AWS platform.

The key project driver, cost saving, was realised, with savings of about 50% compared with the existing dedicated hosting platform. The total cost of ownership (TCO) comparison becomes more favourable as time progresses. The greatest savings are in the S3 layer, and savings might improve further with a migration to RDS and Lightsail.
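Why the TCO comparison improves over time can be shown with a small worked sketch: the one-off migration effort is recovered through the lower monthly bill, after which the gap grows every month. All figures below are hypothetical placeholders chosen only to match the roughly 50% running-cost saving; they are not the project's actual costs.

```python
# All figures are hypothetical placeholders, not the project's real costs.
OLD_MONTHLY = 100.0   # dedicated hosting, per month
NEW_MONTHLY = 50.0    # AWS, per month (about 50% cheaper)
MIGRATION = 200.0     # one-off planning and migration effort


def cumulative_saving(months: int) -> float:
    """Total spent on the old platform minus total spent on AWS."""
    old_total = OLD_MONTHLY * months
    new_total = MIGRATION + NEW_MONTHLY * months
    return old_total - new_total


if __name__ == "__main__":
    for months in (1, 4, 12, 24):
        print(months, cumulative_saving(months))
```

With these placeholder numbers the migration pays for itself after four months (200 / (100 - 50)), and from then on the cumulative saving grows by the monthly difference.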

In the next instalment, we will look at extending our use of AWS from the present IaaS to PaaS, in particular comparing the provisioning and usability of AWS and Oracle Cloud for database PaaS. Have a look here in the next couple of weeks for my update on that investigation.

—

Oyewole, Olanrewaju J (Mr.)

Internet Technologies Ltd.

lanre@net-technologies.com

www.net-technologies.com

Mobile: +44 793 920 3120