In a previous article, “One thousand servers, start with one click”, I described the design and implementation of a simple hybrid-Cloud infrastructure. The view was from a high level, and I intend, God willing, to delve into the detail in a later instalment. Before that though, I wanted to touch on the subject of hybrid Cloud security, briefly.

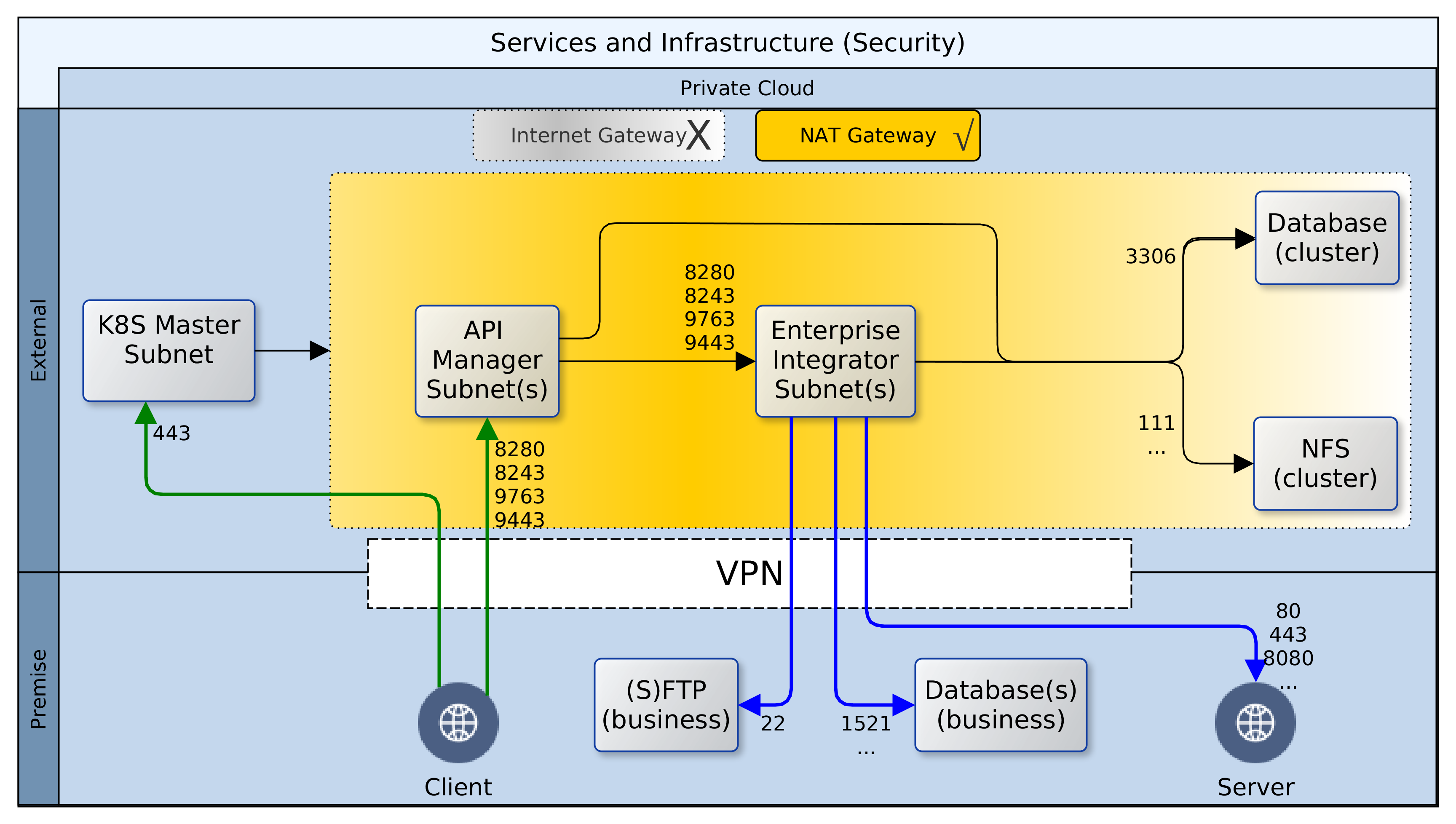

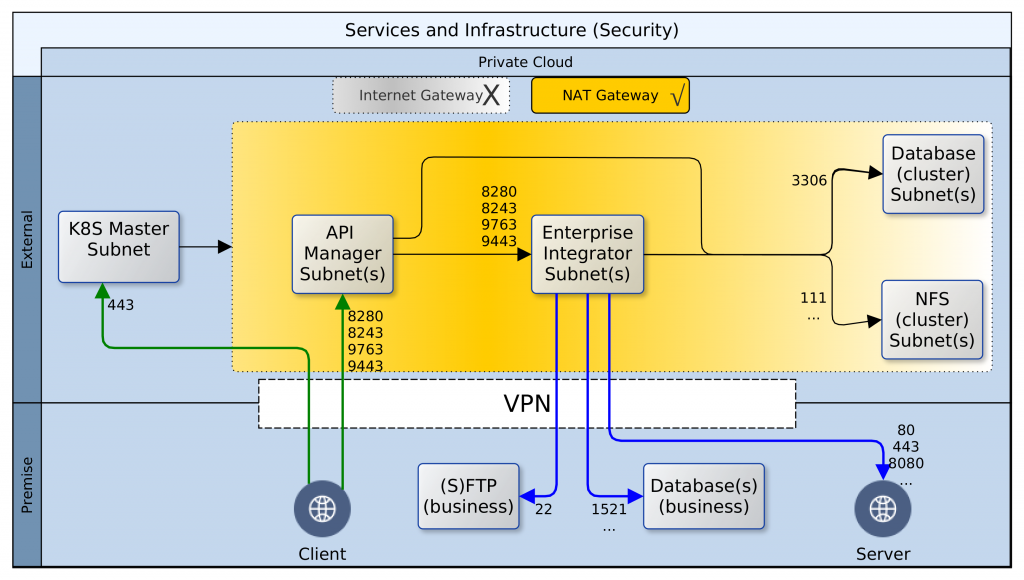

Having deployed resources in a private Cloud and on-premise, certain precautions should be taken to secure the perimeter and the green zone inside the two networks – Cloud and premise. The diagram above paints the big picture. It shows how, based on AWS security recommendations, minimal access/privilege is granted to each resource in the networks. The focus here is on machine access, which is about the lowest level of security. I will not delve into AWS policies, VPN configuration or on-premise firewall rules, as these are not black-and-white and the detail involved does not fit in with the goal for this article.

Here goes! Reading the diagram and translating to words:

- It is convenient to use the Internet Gateway (public router) of your Cloud provider during development. Once you are done with prototyping and debugging, it should be disabled or removed. Switch to a NAT gateway (egress-only router) instead. Your servers can still connect to the outside world for patches, updates, etc., but you can control which sites are accessible from your firewall. Switching to a NAT gateway also means that opportunistic snoopers are kept firmly out.

- Open up port 443 for your Kubernetes (K8S) master node(s) and close all others. Your cluster can even survive without the master node, so don’t be shy: lock it down. Should the need arise, it is easy to temporarily change Cloud and premise firewall rules to open up port 22 (SSH) or others to investigate or resolve issues. Master nodes in the K8S master subnet should have access to all subnets within the cluster, but this does not include known service ports for the servers or databases.

- While your ESB/EI will have several reusable/shared artefacts, the only ones that are of interest to your clients (partners) are the API and PROXY services. For each one of these services, a Swagger definition should be created and imported into the API Manager (APIM). All clients should gain access to the ESB/EI only through the interfaces defined in the APIM, which can be constrained by security policies and monitored for analytics. Therefore, the known service access ports should be open to clients on the APIM, and as with the K8S master, all other ports should be locked down.

- Access to the known service ports on the ESB/EI should be limited to the APIM subnet(s) only; all other ports should be closed.

- The Jenkins CI/CD containers are also deployed to the same nodes as the ESB/EI servers, but they fall under different constraints. Ideally, the Jenkins server should be closed off completely to access from clients. It can be correctly configured to automatically run scheduled and ad-hoc jobs without supervision. If this is a challenge, the service access port should be kept open, but only to access from within the VPN, ideally from a jump-box.

- Access to the cluster databases should be limited to the APIM and ESB/EI subnets only, and further restricted to known service ports – 3306 or other configured port.

- Access to the cluster NFS should be limited to the APIM, ESB/EI, and K8S-master subnets only, and further restricted to known service ports – 111, 1110, 2049, etc., or others as configured.

- On-premise firewall rules should be configured to allow access to SFTP, database, application and web-servers from the ESB/EI server using their private IP addresses over the VPN.

- Wherever possible, all ingress traffic to the private Cloud should flow through the on-premise firewall and the VPN. One great benefit of this approach is that it limits exposure; there are fewer gateways to defend. There are costs though. Firstly, higher latencies are incurred for circuitous routing via the VPN rather than direct/faster routing through Cloud-provider gateways. Other costs include increased bandwidth usage on the VPN, additional load on DNS servers, maintenance of NAT records, and DNS synchronisation of dynamic changes to nodes within the cluster.

- ADDENDUM: Except for SFTP between the ESB/EI server and on-premise SFTP servers, SSH/port-22 access should be disabled. The Cloud infrastructure should be an on-demand, code-driven, pre-packaged environment; created and destroyed as and when needed.
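The access rules in the list above amount to a small allow-list of (source, destination, ports) entries, with everything else denied. The following is a minimal sketch of that idea in Python, not the configuration of any particular Cloud provider. The zone names, the APIM/ESB service port (8243), the Jenkins port (8080), and the choice of the VPN as the source for the K8S master are all assumptions made for illustration; substitute your own subnets and configured ports.

```python
from typing import NamedTuple


class Rule(NamedTuple):
    source: str        # zone allowed to initiate the connection
    destination: str   # zone that receives the connection
    ports: frozenset   # TCP ports opened for that pair


# Assumed ports: 8243 and 8080 are illustrative, not taken from the article.
APIM_PORTS = frozenset({8243})
ESB_PORTS = frozenset({8243})
JENKINS_PORTS = frozenset({8080})
DB_PORTS = frozenset({3306})
NFS_PORTS = frozenset({111, 1110, 2049})

# Hypothetical zone names; anything not listed here is denied.
RULES = [
    Rule("vpn", "k8s-master", frozenset({443})),
    Rule("clients", "apim", APIM_PORTS),
    Rule("apim", "esb", ESB_PORTS),
    Rule("jump-box", "jenkins", JENKINS_PORTS),
    Rule("apim", "database", DB_PORTS),
    Rule("esb", "database", DB_PORTS),
    Rule("apim", "nfs", NFS_PORTS),
    Rule("esb", "nfs", NFS_PORTS),
    Rule("k8s-master", "nfs", NFS_PORTS),
    Rule("esb", "on-premise-sftp", frozenset({22})),
]


def is_allowed(source: str, destination: str, port: int) -> bool:
    """Default deny: allow only if an explicit rule matches the request."""
    return any(
        rule.source == source
        and rule.destination == destination
        and port in rule.ports
        for rule in RULES
    )


if __name__ == "__main__":
    print(is_allowed("apim", "database", 3306))     # APIM may reach the database
    print(is_allowed("clients", "database", 3306))  # clients may not
```

Keeping the rules as data like this makes it straightforward to review them in one place and to generate provider-specific firewall or security-group definitions from a single source.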

And that’s all folks! Once again, this is not exhaustive coverage of all the aspects of security required for this hybrid-Cloud. It is rather a quick run-through of the foundational provisions. The aim is to identify a few key provisions that can be deployed very quickly and provide a good level of protection on day one. All of this builds on a principle adopted from AWS best practice. The principle states that AWS is responsible for the security of the Cloud while end-users are responsible for security in the Cloud. The end-user responsibility for Cloud security begins with another principle: access by least privilege. This means that for a given task, the minimum privileges should be granted to the most restricted community to which one or more parties (man or machine) is granted membership.
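One way to keep the "most restricted community" idea honest over time is to audit rules for sources that are wider than intended. The sketch below uses Python's standard `ipaddress` module to flag any rule whose source CIDR covers more addresses than a chosen threshold. The rule names, CIDR ranges, and the threshold of 256 addresses are hypothetical values chosen for illustration only.

```python
import ipaddress


def overly_broad(rules, max_addresses=256):
    """Return the rules whose source CIDR covers more than max_addresses."""
    flagged = []
    for name, cidr, port in rules:
        network = ipaddress.ip_network(cidr)
        if network.num_addresses > max_addresses:
            flagged.append((name, cidr, port))
    return flagged


# Hypothetical rules: (name, source CIDR, destination port).
sample = [
    ("apim-to-db", "10.0.2.0/24", 3306),  # one subnet, 256 addresses
    ("debug-ssh", "0.0.0.0/0", 22),       # open to the whole Internet
]

if __name__ == "__main__":
    for name, cidr, port in overly_broad(sample):
        print(f"Rule '{name}' exposes port {port} to {cidr}")
```

A check of this kind is most useful for catching temporary openings, such as an SSH rule added for debugging, that were never closed again.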

—

Oyewole, Olanrewaju J (Mr.)

Internet Technologies Ltd.

lanre@net-technologies.com

www.net-technologies.com

Mobile: +44 793 920 3120